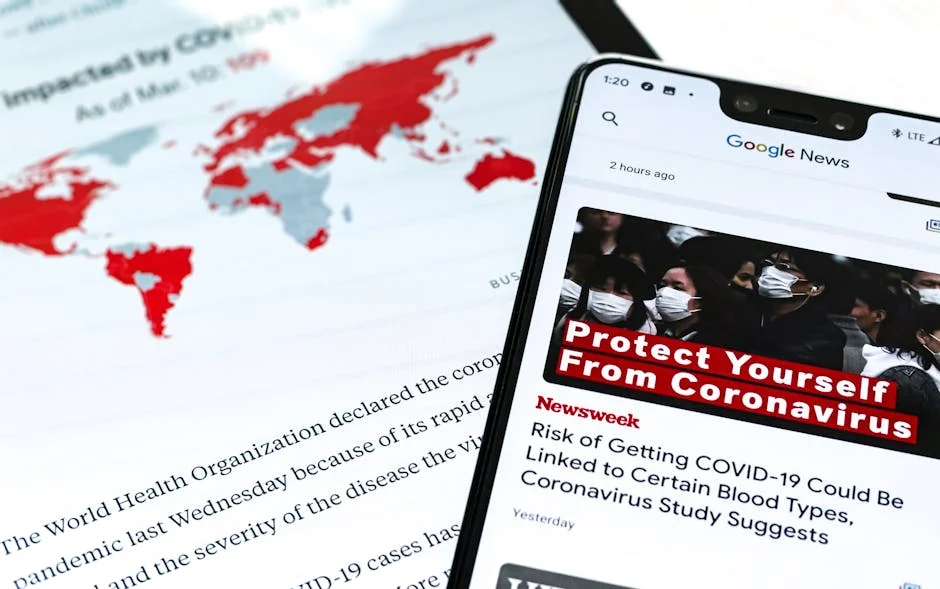

YouTube has introduced a new tool enabling public figures to report fake videos, addressing growing concerns over deepfakes and misinformation. The feature, rolled out globally, aims to empower creators and celebrities to flag AI-generated content that could harm their reputations. The move comes as deepfake technology proliferates, with reports indicating a 300% increase in synthetic media threats over the past year. For Singaporean markets and businesses, the development raises questions about digital trust, regulatory responses, and the economic implications of content moderation.

YouTube’s New Tool: A Response to Rising Deepfake Threats

The tool allows public figures to submit reports directly through their Google accounts, with automated systems prioritizing cases involving impersonation or malicious intent. YouTube’s transparency report highlights that 78% of deepfake content in 2023 targeted politicians, celebrities, or business leaders, underscoring the urgency of the measure. The company cited a partnership with cybersecurity firms to enhance its detection algorithms, though critics argue that the focus remains on high-profile users rather than everyday ones.

Industry analysts note that the tool aligns with broader efforts by tech giants to mitigate AI risks. However, concerns persist about how consistently it will be enforced. A 2022 study by the Singapore Institute of International Affairs found that 62% of local businesses fear reputational damage from synthetic media, suggesting that a tool limited to public figures may not address systemic vulnerabilities.

Market Reactions: Tech Stocks and Investor Confidence

Following the announcement, shares of Alphabet Inc. (Google’s parent company) rose 1.2%, reflecting investor optimism about proactive risk management. However, some analysts caution that the tool’s effectiveness remains unproven. “This is a step forward, but it doesn’t solve the underlying issue of AI-driven misinformation,” said Dr. Lim Wei, a tech policy researcher at Nanyang Technological University. “Investors should monitor how YouTube balances content moderation with user growth.”

The move also impacts advertising revenue models. Brands increasingly demand assurances that their ads appear alongside authentic content. A 2023 survey by the Singapore Advertising Association revealed that 45% of advertisers would reconsider partnerships with platforms failing to combat deepfakes, potentially affecting YouTube’s $25 billion ad revenue stream.

Business Implications: Brand Protection and Digital Trust

For Singapore-based businesses, the tool offers a mechanism to safeguard reputations but raises questions about access and equity. Smaller companies without dedicated legal teams may struggle to navigate the reporting process. “Public figures have resources; the average user does not,” said Tan Mei Ling, CEO of a local digital marketing firm. “This could deepen the trust gap between large corporations and consumers.”

The tool also intersects with guidelines from Singapore’s Personal Data Protection Commission (PDPC), which mandate strict data governance. Businesses must now evaluate whether YouTube’s measures align with their own cybersecurity protocols. Compliance experts warn that failure to address synthetic media risks could lead to regulatory scrutiny under the Personal Data Protection (Amendment) Act 2020, which began taking effect in 2021.

Investment Perspective: Cybersecurity and Content Moderation

The development underscores growing investment in AI detection technologies. The Cyber Security Agency of Singapore (CSA) reported a 50% surge in funding for deepfake countermeasures in 2023, with startups like Aether AI securing $12 million in venture capital. Investors increasingly view content moderation as a critical sector, with the global market for AI moderation tools projected to reach $4.8 billion by 2026.

However, the tool’s reliance on automated systems risks false positives, potentially stifling legitimate content. This has prompted calls for human oversight. “Automation is efficient, but it lacks nuance,” said Rajiv Patel, a venture capitalist specializing in tech. “Investors should prioritize companies that combine AI with ethical review processes.”

Economic Impact: Trust in Digital Platforms and Advertising Revenue

Trust in digital platforms remains a cornerstone of Singapore’s digital economy, which contributed 12% to the nation’s GDP in 2022. The tool’s success could bolster confidence, but its limitations may exacerbate existing challenges. A 2023 report by the Monetary Authority of Singapore (MAS) warned that misinformation could erode consumer trust, leading to a 5-10% decline in e-commerce transactions if left unchecked.

For advertisers, the tool’s impact is mixed. While it reduces the risk of brands being associated with fake content, it also raises the operational cost of content verification. Businesses must now budget for monitoring tools or third-party audits, adding to overheads. “This is a double-edged sword,” said Fiona Koh, a finance analyst at OCBC Bank. “It’s a cost now, but a potential savings in reputational damage later.”

What’s Next for YouTube and Content Moderation?

YouTube’s tool is part of a broader industry trend, with Meta and TikTok also rolling out similar features. However, the absence of a unified regulatory framework complicates enforcement. Singapore’s proposed Digital Services Act, expected to pass in 2024, may impose stricter obligations on platforms, forcing YouTube to adapt further.

Investors and businesses should monitor how the tool evolves. Key indicators include adoption rates among public figures, reduction in reported deepfake incidents, and regulatory responses. As AI technology advances, the economic stakes of content moderation will only grow, making this a critical area for ongoing scrutiny.
